When AI assistants get access to your data warehouse, most teams assume the hard part is solved. Plug in the Model Context Protocol, let the language model query Snowflake, and watch the insights flow. But the accuracy problems start almost immediately: wrong revenue numbers, mismatched attribution, metrics that mean something different depending on who defined them. Reliable AI analytics requires more than raw data access; it requires a system that tells the AI what the data actually means. That system is the semantic layer. MCP provides the transport; the semantic layer provides the knowledge. Together, they form the architecture that makes AI answers deterministic and trustworthy.

This guide covers how MCP and semantic layers work individually, why each is incomplete without the other, and what the full reference architecture looks like for ecommerce teams building AI analytics workflows today.

A Quick Primer on MCP (Model Context Protocol)

MCP is an open protocol, built on JSON-RPC, that standardizes how AI agents access external tools and data sources. If you have ever had to build a custom integration to connect an AI assistant to your data warehouse, you have felt the M×N problem: M different AI models multiplied by N different data sources equals an explosion of custom code. MCP solves this by providing a universal interface.

Think of it like USB-C. Before USB-C, every device needed its own cable. USB-C collapsed those connections into one standard. MCP does the same for AI: instead of building unique connectors for every model-to-data-source combination, MCP provides a standardized interface. AI model vendors build one MCP client. Data source vendors build one MCP server. The protocol handles communication between them.
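The arithmetic behind the M×N problem is worth spelling out. Here is a minimal sketch; the counts are illustrative assumptions, not figures from any survey, and the function names are made up for this example.

```python
def custom_integrations(models: int, sources: int) -> int:
    """Point-to-point: every model needs its own connector to every source."""
    return models * sources


def mcp_components(models: int, sources: int) -> int:
    """Shared protocol: one client per model plus one server per source."""
    return models + sources


# Illustrative counts only (assumed, not measured).
models, sources = 4, 7
print(custom_integrations(models, sources))  # 28
print(mcp_components(models, sources))       # 11
```

Adding an eighth data source costs four new connectors in the first model and one new server in the second.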

Anthropic built MCP, but the protocol is open and not tied to Claude or any specific model. By mid-2025, most major data warehouses (Snowflake, BigQuery, Databricks) and a growing number of BI and analytics platforms had shipped MCP server implementations. Claude Desktop, Cursor, Windsurf, and ChatGPT all support MCP as clients.

What MCP provides: standardized tool discovery, tool invocation, and data retrieval. An AI agent connected via MCP can ask a data source what resources are available, call those resources with parameters, and receive structured data back. The transport layer is a clean, well-defined interface that replaces ad-hoc API integrations.
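To make discovery and invocation concrete, here is a rough sketch of the JSON-RPC 2.0 messages a client sends. The `tools/list` and `tools/call` method names come from the MCP specification; the tool name `get_metric` and its arguments are hypothetical, and a real client would normally use an MCP SDK and complete the initialization handshake first rather than build envelopes by hand.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each request in a session gets its own id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Discovery: ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invocation: call one tool by name with arguments.
# "get_metric" and its arguments are hypothetical, for illustration only.
call_tool = jsonrpc_request(
    "tools/call",
    {
        "name": "get_metric",
        "arguments": {"metric": "revenue", "date_range": "last_month"},
    },
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Notice what is absent: nothing in either message says what "revenue" means. The protocol carries the request; it does not define the metric.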

What MCP Does Not Solve

MCP handles access. It does not handle meaning. In analytics, meaning is the hard part.

Access Without Understanding

MCP lets an AI assistant query your Snowflake instance. It does not tell the AI what the data means. Consider: "What was our revenue last month?" MCP allows the agent to connect and run queries against the orders table. But the AI does not know whether to use "total_price," "subtotal_price," or another field. It does not know whether "revenue" includes refunds or excludes cancelled orders. It does not know whether test orders should be filtered out.

The agent has data access. It lacks semantic context.
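A toy example shows how far apart the plausible readings of "revenue" can land. The four orders below are made up, and the `refunded`, `cancelled`, and `test` fields are hypothetical stand-ins for however your schema records those facts.

```python
# Made-up orders; only total_price and subtotal_price are names from the text.
orders = [
    {"total_price": 120.0, "subtotal_price": 100.0, "refunded": 0.0, "cancelled": False, "test": False},
    {"total_price": 60.0, "subtotal_price": 50.0, "refunded": 50.0, "cancelled": False, "test": False},
    {"total_price": 240.0, "subtotal_price": 200.0, "refunded": 0.0, "cancelled": True, "test": False},
    {"total_price": 12.0, "subtotal_price": 10.0, "refunded": 0.0, "cancelled": False, "test": True},
]

# Reading 1: sum total_price over every row.
naive_total = sum(o["total_price"] for o in orders)

# Reading 2: sum subtotal_price over every row.
naive_subtotal = sum(o["subtotal_price"] for o in orders)

# Reading 3: subtotal minus refunds, skipping cancelled and test orders.
governed = sum(
    o["subtotal_price"] - o["refunded"]
    for o in orders
    if not o["cancelled"] and not o["test"]
)

print(naive_total)     # 432.0
print(naive_subtotal)  # 360.0
print(governed)        # 100.0
```

All three are valid queries against the same table. Only one of them matches what the finance team would call revenue, and nothing in the schema says which.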

The Metadata Gap

Raw database schemas do not carry business knowledge. A column named "rev_net_adj_v2" tells you nothing about what metric it represents, how it is calculated, or what business logic it applies. When an AI reads schema metadata, it sees field names and data types. It does not see that "orders.total_price" is gross revenue before refunds, that "orders.subtotal_price" excludes shipping, or that "orders.financial_status" determines whether an order has been paid.

This metadata gap is where LLM hallucinations thrive. Language models are excellent at pattern matching, but they cannot reliably infer business-specific semantics from raw schema information.

Why This Matters for Ecommerce

Ecommerce data is especially prone to the metadata gap because it spans multiple platforms, each with its own conventions:

- Shopify's "total_price" includes shipping and taxes

- Meta's "reported_revenue" only counts conversions Meta can track

- Google Ads' "conversion_value" uses last-click attribution with its own window

- Klaviyo's "revenue" includes only orders attributed to email campaigns

"Revenue" has four different definitions across four platforms. MCP gives AI access to all four sources. It does not reconcile them. Without a semantic layer, every query is a potential source of inconsistency.

The Semantic Layer: The Missing Piece

A semantic layer addresses exactly the problem MCP leaves unsolved. If MCP is the road network, the semantic layer is the GPS plus traffic rules. It tells AI agents where they can go, how to get there, and what the destination means.

What the Semantic Layer Provides

Metric definitions. Every metric is defined with an exact formula, specific data sources, and calculation rules. "Revenue" is not an ambiguous column name; it is a defined metric: sum of gross sales minus returns and discounts from Shopify orders, excluding test orders, for the selected date range. This single definition eliminates an entire class of LLM errors.

Entity relationships. The semantic layer defines how entities relate: orders belong to customers, customers have multiple orders, campaigns generate sessions that generate orders. These relationships matter for correct query construction.

Synonym mapping. The layer knows that "AOV," "average order value," and "average basket size" all refer to the same metric. Natural language variations resolve consistently regardless of phrasing.

Certification signals. Some metrics are certified as authoritative; others are marked as estimates. The layer tells AI which numbers to trust for which decisions.

Access controls and governance. Row-level security, team-level permissions, and data masking are managed in the semantic layer, ensuring AI agents respect the same governance policies as human analysts.

Error handling. A well-built semantic layer returns structured errors when a query is out of scope, rather than producing approximate or hallucinated answers. This is what distinguishes production-grade AI analytics from prototype demos.
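Several of these capabilities fit in a small data structure. The sketch below is one possible shape, not any vendor's format: the `Metric` class, the SQL expressions, and the error code are all hypothetical. It covers metric definitions, synonym mapping, certification flags, and structured errors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    sql_expression: str  # placeholder SQL; column names are assumptions
    certified: bool
    synonyms: tuple = ()


METRICS = [
    Metric(
        name="revenue",
        description="Gross sales minus returns and discounts, excluding test orders.",
        sql_expression="SUM(gross_sales - returns - discounts)",
        certified=True,
    ),
    Metric(
        name="aov",
        description="Revenue divided by order count.",
        sql_expression="SUM(gross_sales - returns - discounts) / COUNT(DISTINCT order_id)",
        certified=True,
        synonyms=("average order value", "average basket size"),
    ),
]

# Index every canonical name and synonym, lowercased, to one Metric.
_LOOKUP = {}
for m in METRICS:
    for term in (m.name, *m.synonyms):
        _LOOKUP[term.lower()] = m


def resolve(term: str) -> dict:
    """Return the governed metric for a phrase, or a structured error."""
    metric = _LOOKUP.get(term.strip().lower())
    if metric is None:
        return {"ok": False, "error": {"code": "UNKNOWN_METRIC", "term": term}}
    return {"ok": True, "metric": metric.name, "certified": metric.certified}


print(resolve("Average Basket Size"))  # {'ok': True, 'metric': 'aov', 'certified': True}
print(resolve("vibes score"))
```

The second call returns an error object instead of a best guess, which gives the agent something explicit to relay to the user.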

Semantic Layer as a Business Context API

The cleanest way to think about a semantic layer in the MCP context: it is a business context API. Rather than exposing raw database tables through MCP, you expose semantic layer operations as callable tools. AI assistants call pre-defined metrics, dimensions, and filters rather than writing SQL against raw schemas.

When you ask "What was ROAS last month?" the MCP request is not SELECT SUM(revenue) / SUM(ad_spend) FROM .... It is CALL metric('blended_roas', date_range='last_month'). The semantic layer handles the SQL generation against governed definitions. The result is deterministic: the same question always returns the same calculation.

This is the core reason the semantic layer makes AI analytics reliable. It transforms open-ended LLM text generation into deterministic, governed retrieval.
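Here is a rough sketch of what "deterministic" means in practice. The `compile_metric` function, the table name, and the `day` column are hypothetical; the point is that the SQL text comes from a stored definition and an explicit date calculation, so the same call always compiles to the same query.

```python
from datetime import date, timedelta

# Hypothetical governed definitions: metric name -> SQL expression and table.
DEFINITIONS = {
    "blended_roas": {
        "expression": "SUM(net_revenue) / NULLIF(SUM(ad_spend), 0)",
        "table": "analytics.daily_channel_performance",
    },
}


def last_month(today: date) -> tuple:
    """Return (first_day, last_day) of the calendar month before `today`."""
    last_day = today.replace(day=1) - timedelta(days=1)
    return last_day.replace(day=1), last_day


def compile_metric(name: str, date_range: str, today: date) -> str:
    """Turn a governed metric call into SQL. The model never writes this text."""
    if name not in DEFINITIONS:
        raise KeyError(f"metric not defined: {name}")
    if date_range != "last_month":
        raise ValueError(f"unsupported date_range: {date_range}")
    start, end = last_month(today)
    d = DEFINITIONS[name]
    return (
        f"SELECT {d['expression']} AS {name} "
        f"FROM {d['table']} "
        f"WHERE day BETWEEN '{start.isoformat()}' AND '{end.isoformat()}'"
    )


sql = compile_metric("blended_roas", "last_month", today=date(2026, 3, 15))
print(sql)
```

The language model chooses the metric name and the date range. Everything after that is ordinary code with no sampling involved.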

The Architecture: How MCP + Semantic Layer Work Together

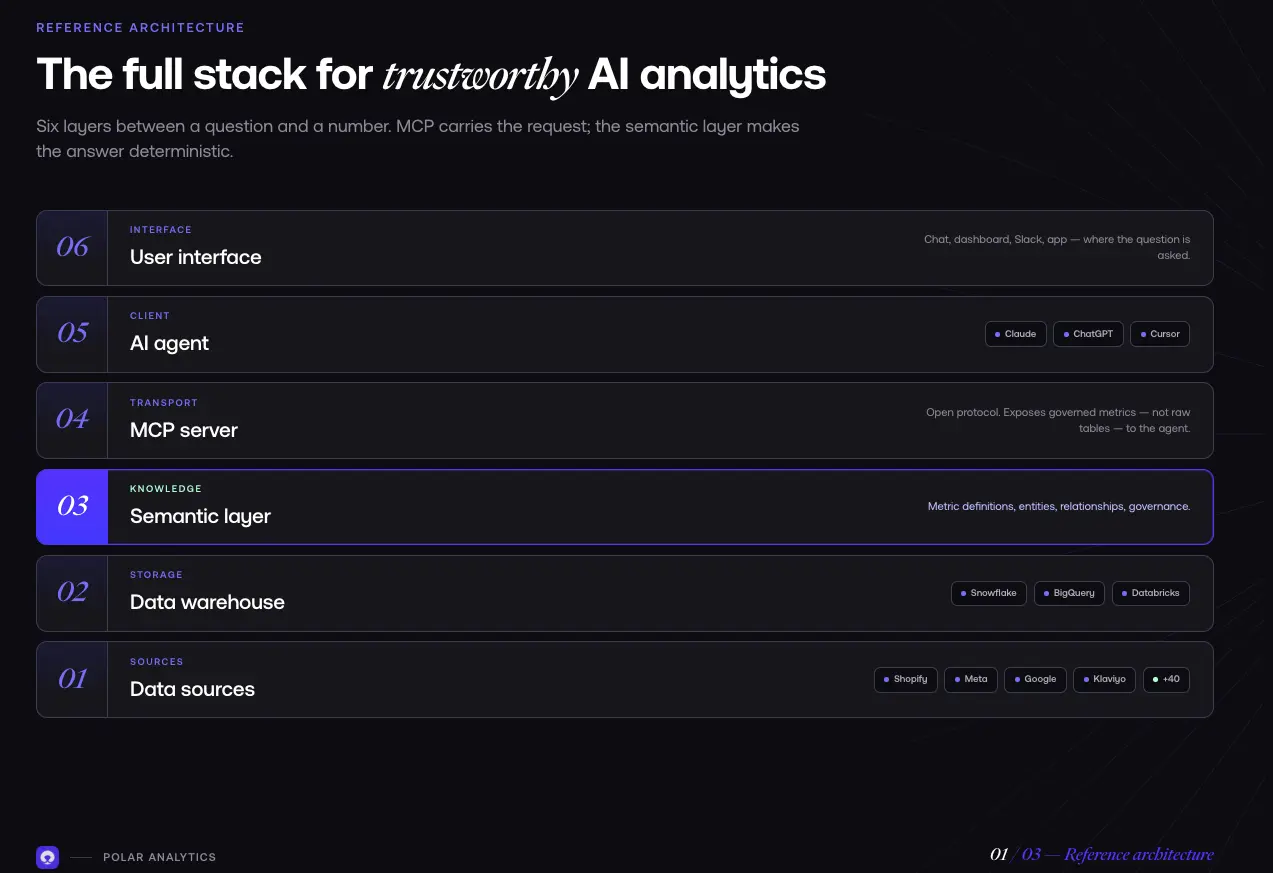

Reference Architecture

The full stack for trustworthy AI analytics:

Layer 1: Data sources. Shopify, Meta Ads, Google Ads, TikTok Ads, Klaviyo, Recharge, Stripe. Each source has its own schema, metric definitions, and API conventions.

Layer 2: Data warehouse. Snowflake, BigQuery, or Databricks. Raw data is extracted, transformed, and loaded from all sources. The warehouse stores data but does not enforce business meaning.

Layer 3: Semantic layer. The business context layer on top of the warehouse. Defines all metrics, entities, relationships, and governance policies. This is where business knowledge lives.

Layer 4: MCP server. Exposes semantic layer operations to AI agents through the MCP protocol. Rather than exposing raw warehouse tables, the MCP server exposes governed metrics and dimensions. Agents can only call operations the semantic layer has defined and certified.

Layer 5: AI agent. Claude, ChatGPT, or any MCP-compatible assistant. Acts as the client connecting to the semantic layer through the MCP interface. Operates within the structured context the semantic layer provides rather than writing ad-hoc queries.

Layer 6: User interface. Chat interface, dashboard, Slack bot, or app. The human-facing layer where users ask questions and receive answers.

The Query Flow

- User asks a question: "What was blended ROAS by channel last month?"

- AI agent parses the intent: Identifies the relevant metric (blended ROAS) and dimensions (by channel, last month).

- Agent calls the semantic layer via MCP: Instead of writing SQL, the agent sends: metrics.blended_roas(channel_breakdown=true, date_range='last_month').

- Semantic layer generates governed SQL: It knows that blended ROAS equals net revenue divided by ad spend across all channels. It generates optimized SQL, applies all filters, and joins necessary tables.

- Warehouse executes and returns data.

- AI formats the response: Structured data with clear metric definitions, formatted into a human-readable answer.

The AI never wrote SQL against a raw table. It called a governed operation through the MCP transport layer.
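The flow above can be sketched end to end with an in-memory SQLite database standing in for the warehouse. Everything here is an assumption made for illustration: the table, the numbers, and the `handle_tool_call` function are invented, and an explicit `month` argument replaces the relative `date_range='last_month'` to keep the example short.

```python
import sqlite3

# The semantic layer's governed SQL for blended ROAS by channel
# (net revenue divided by ad spend). Table and columns are hypothetical.
BLENDED_ROAS_BY_CHANNEL = """
    SELECT channel,
           ROUND(SUM(net_revenue) / SUM(ad_spend), 2) AS blended_roas
    FROM channel_performance
    WHERE month = ?
    GROUP BY channel
    ORDER BY channel
"""


def handle_tool_call(conn, name, arguments):
    """Accept a governed call, run the governed SQL, return structured rows."""
    if name != "metrics.blended_roas" or not arguments.get("channel_breakdown"):
        return {"ok": False, "error": "operation not defined in semantic layer"}
    rows = conn.execute(BLENDED_ROAS_BY_CHANNEL, (arguments["month"],)).fetchall()
    return {"ok": True, "rows": [{"channel": c, "blended_roas": r} for c, r in rows]}


# Warehouse stand-in with made-up numbers.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE channel_performance "
    "(month TEXT, channel TEXT, net_revenue REAL, ad_spend REAL)"
)
conn.executemany(
    "INSERT INTO channel_performance VALUES (?, ?, ?, ?)",
    [
        ("2026-02", "meta", 9000.0, 3000.0),
        ("2026-02", "google", 5000.0, 2000.0),
        ("2026-01", "meta", 1000.0, 1000.0),
    ],
)

# What the agent sends instead of SQL.
result = handle_tool_call(
    conn, "metrics.blended_roas", {"channel_breakdown": True, "month": "2026-02"}
)

# Formatting step: turn structured rows into a readable answer.
for row in result["rows"]:
    print(f"{row['channel']}: {row['blended_roas']}")
# google: 2.5
# meta: 3.0
```

The only SQL in the program is the string the semantic layer owns. The agent's contribution is a tool name and two arguments.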

Why This Architecture Prevents Hallucination

Three properties make AI answers deterministic:

The agent does not write SQL. It calls pre-defined operations. There is no SQL generation step where the language model can introduce semantic errors.

The semantic layer constrains what is possible. The AI cannot invent metrics. If "blended ROAS" is not defined in the semantic model, the agent cannot query it.

Out-of-scope questions return errors, not guesses. If you ask for a metric that is not defined, you get a clear error, not a hallucination dressed up as a confident answer.

This is the difference between AI analytics on raw LLM queries and AI analytics on a semantic layer: the former depends on the model getting it right; the latter makes it structurally difficult to get it wrong.
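One way to make the constraint structural is to put the list of defined metrics into the tool's input schema. MCP tool descriptors carry a name, a description, and a JSON Schema under `inputSchema`; the tool name `query_metric`, the metric list, and the hand-rolled `validate` function below are assumptions for illustration. A real server would more likely use a JSON Schema validation library.

```python
# Hypothetical governed metrics; in practice this comes from the semantic model.
DEFINED_METRICS = ["aov", "blended_roas", "revenue"]

# Tool descriptor in the general shape returned by MCP tool discovery.
QUERY_METRIC_TOOL = {
    "name": "query_metric",  # hypothetical tool name
    "description": "Return a governed metric for a date range.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "metric": {"type": "string", "enum": DEFINED_METRICS},
            "date_range": {"type": "string", "enum": ["last_7_days", "last_month"]},
        },
        "required": ["metric", "date_range"],
    },
}


def validate(arguments: dict) -> list:
    """Check arguments against the enum constraints; return a list of problems."""
    schema = QUERY_METRIC_TOOL["inputSchema"]
    problems = []
    for field in schema["required"]:
        if field not in arguments:
            problems.append(f"missing required field: {field}")
    for field, value in arguments.items():
        spec = schema["properties"].get(field)
        if spec is None:
            problems.append(f"unknown field: {field}")
        elif value not in spec["enum"]:
            problems.append(f"{field}={value!r} is not defined; allowed: {spec['enum']}")
    return problems


print(validate({"metric": "blended_roas", "date_range": "last_month"}))  # []
print(validate({"metric": "vibes_score", "date_range": "last_month"}))
```

Because the agent sees the enum during discovery, it knows up front which metrics exist, and the server rejects anything outside that list before any query runs.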

dbt and the Semantic Layer Ecosystem

For teams already using dbt for data transformation, the dbt Semantic Layer is an increasingly important piece of the MCP ecosystem. Built on MetricFlow (open-sourced under Apache 2.0), dbt's Semantic Layer went GA in 2025 and exposes governed metrics through SQL and GraphQL APIs. A growing number of MCP server implementations can connect to it directly.

A dbt MCP server translates natural language queries into governed MetricFlow requests. The workflow is familiar: define metrics in dbt, validate them through the dbt CLI, and host them through an MCP-compatible service. For teams with existing dbt investments, this is often the fastest path to governed AI analytics without rebuilding the data stack.

Cube, AtScale, and other semantic layer platforms also offer MCP server implementations, expanding the ecosystem of tools that can serve as the governed layer between your warehouse and your AI agents.

The practical implication: whatever semantic layer technology your organization adopts, MCP compatibility is now a standard requirement.

The Open Semantic Interchange (OSI) Standard

In January 2026, the Open Semantic Interchange (OSI) v1.0 specification was published. OSI is a vendor-neutral format for encoding semantic layer definitions (metric definitions, entity relationships, and governance policies) so they can be shared across tools and environments. The founding partners include Snowflake, Databricks, AtScale, and Salesforce.

OSI matters because it addresses a practical problem: if you define your semantic model in one tool, can you move it to another? OSI provides a portable format for semantic definitions that works across vendors. However, the specification is still early: v1.0 is published, but Phase 2 native platform support across the founding partners is roadmapped for Q2–Q4 2026 and has not shipped yet. Growing support from major vendors is a strong signal, but widespread implementation is still ahead.

OSI connects to MCP through a developing ecosystem of MCP servers that understand OSI-formatted definitions. Rather than hardcoding metric definitions into every MCP server, organizations will be able to maintain definitions in OSI format and expose them through any OSI-compatible MCP server. This is an important direction for teams managing multiple AI systems, but it is an emerging standard, not yet a production-ready ecosystem.

How Polar Implements This Architecture

Polar Analytics implements the MCP + semantic layer architecture specifically for ecommerce. Polar MCP connects Claude, ChatGPT, or Cursor directly to a managed semantic layer that unifies Shopify, Meta Ads, Google Ads, TikTok, Klaviyo, Recharge, Stripe, and 40+ other sources into one governed model.

Every metric is pre-defined and certified: ROAS (blended and by channel, with Shapley-based attribution recovering the conversion signal iOS and Safari strip), LTV (cohort-based with repeat purchase analysis), CAC, AOV, contribution margin, MER, new vs. returning customer splits. The semantic layer sits on dedicated Snowflake instances, one per customer, fully isolated.

Ask Polar, the built-in AI analyst, queries this semantic layer through MCP, with no raw SQL and no metric ambiguity. Role-based access controls are enforced at the semantic layer, ensuring every AI query respects the same governance rules as human analysts. Implementation takes hours. No warehouse provisioning, no dbt, no data team required.

What Data Teams Should Do Now

Audit Your Current AI Analytics

If your AI tool connects directly to your warehouse without a semantic layer, you are relying on the language model to infer business knowledge from raw schemas. That is where accuracy breaks down. The key signal: can your AI explain exactly how it calculated the number it just gave you?

Evaluate MCP Readiness

Check whether your data warehouse, BI tools, and analytics platforms support MCP. If they do not yet, ask when they will. MCP compatibility is now a requirement for AI-ready data infrastructure. Teams that build on MCP-compatible tools today will have significantly more flexibility as the ecosystem matures.

Start with Governed Metric Definitions

Before selecting AI models or building AI features, invest in your metric definitions. Define your core 10–20 metrics (revenue, ROAS, LTV, CAC, AOV, conversion rate) with exact formulas and data sources. This is the foundation everything else builds on. Without it, every AI query is a potential source of inconsistency.
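As a starting point, here is what "exact formulas" can look like for four of those metrics. The formulas are common conventions rather than the only valid definitions (many teams include costs beyond ad spend in CAC, for example), and the totals are made up.

```python
def safe_div(numerator: float, denominator: float):
    """Return None instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else None


# Made-up monthly totals, for illustration only.
totals = {
    "net_revenue": 50_000.0,
    "ad_spend": 12_500.0,
    "orders": 625,
    "new_customers": 250,
    "sessions": 25_000,
}

metrics = {
    # ROAS: net revenue per unit of ad spend.
    "roas": safe_div(totals["net_revenue"], totals["ad_spend"]),
    # AOV: net revenue per order.
    "aov": safe_div(totals["net_revenue"], totals["orders"]),
    # CAC: ad spend per newly acquired customer (assumed definition).
    "cac": safe_div(totals["ad_spend"], totals["new_customers"]),
    # Conversion rate: orders per session.
    "conversion_rate": safe_div(totals["orders"], totals["sessions"]),
}

for name, value in metrics.items():
    print(name, value)
```

Writing each formula down forces the decisions that otherwise stay implicit: which revenue figure goes in the numerator, which spend counts, and what happens when the denominator is zero.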

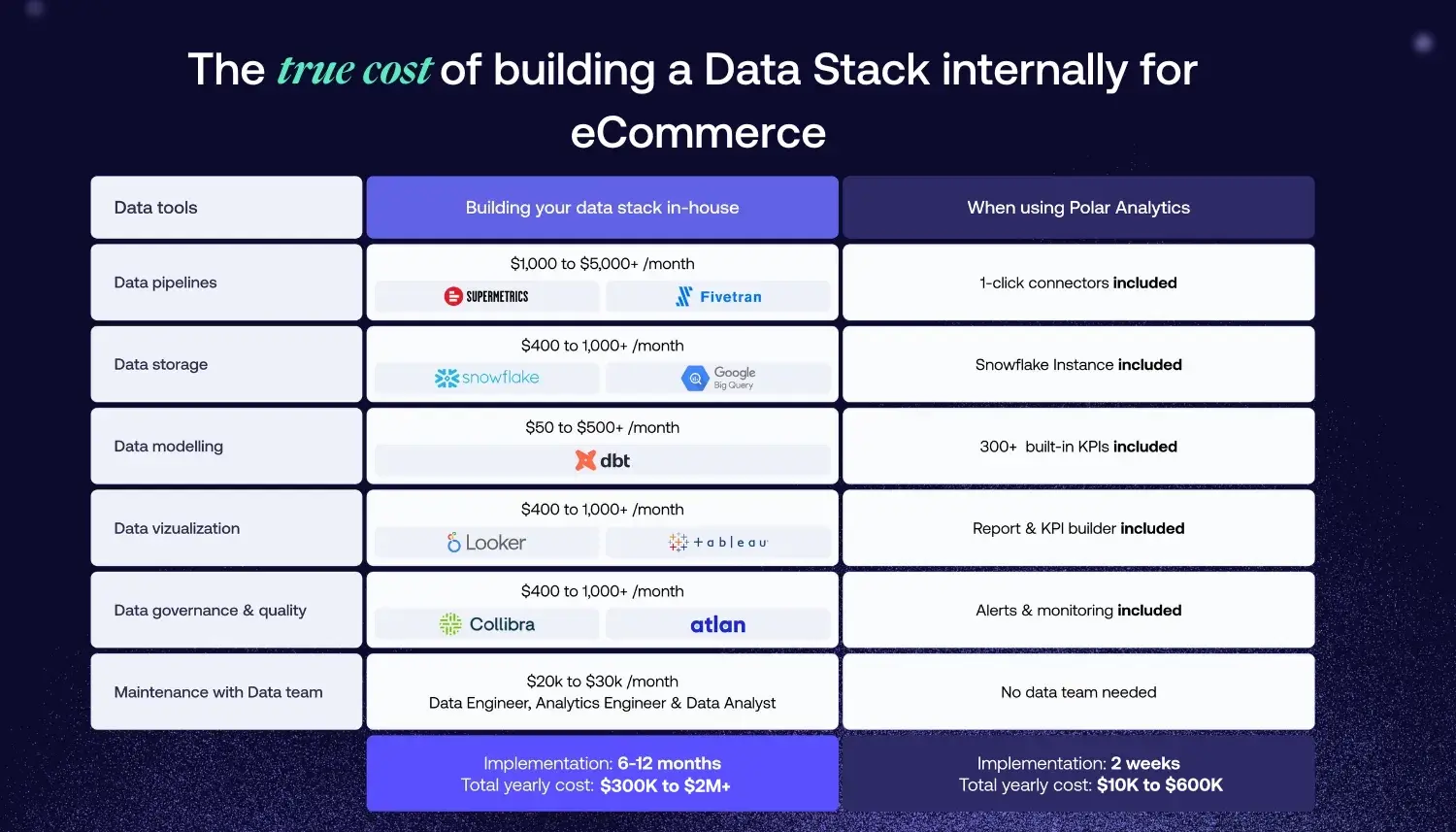

Consider Build vs. Buy

Building a semantic layer from scratch is a significant investment. Maintaining it, which means keeping definitions current as your business and data sources evolve, is an ongoing commitment. For most ecommerce teams under 50 people, a managed service like Polar is the right starting point. For larger organizations with dedicated data engineering, building your own semantic model may make sense. Either way, the key is ensuring the layer is actively maintained: stale definitions are almost as dangerous as having none.
