Your BI tool has a semantic layer built in. So why would your data team need a separate one?

That is the question at the heart of the universal vs. BI-native semantic layer debate. Both approaches provide a layer between your underlying data and your analytics tools. Both aim to make business metrics consistent and data governance possible. But they differ in one fundamental way: where the semantic layer lives, and who controls it.

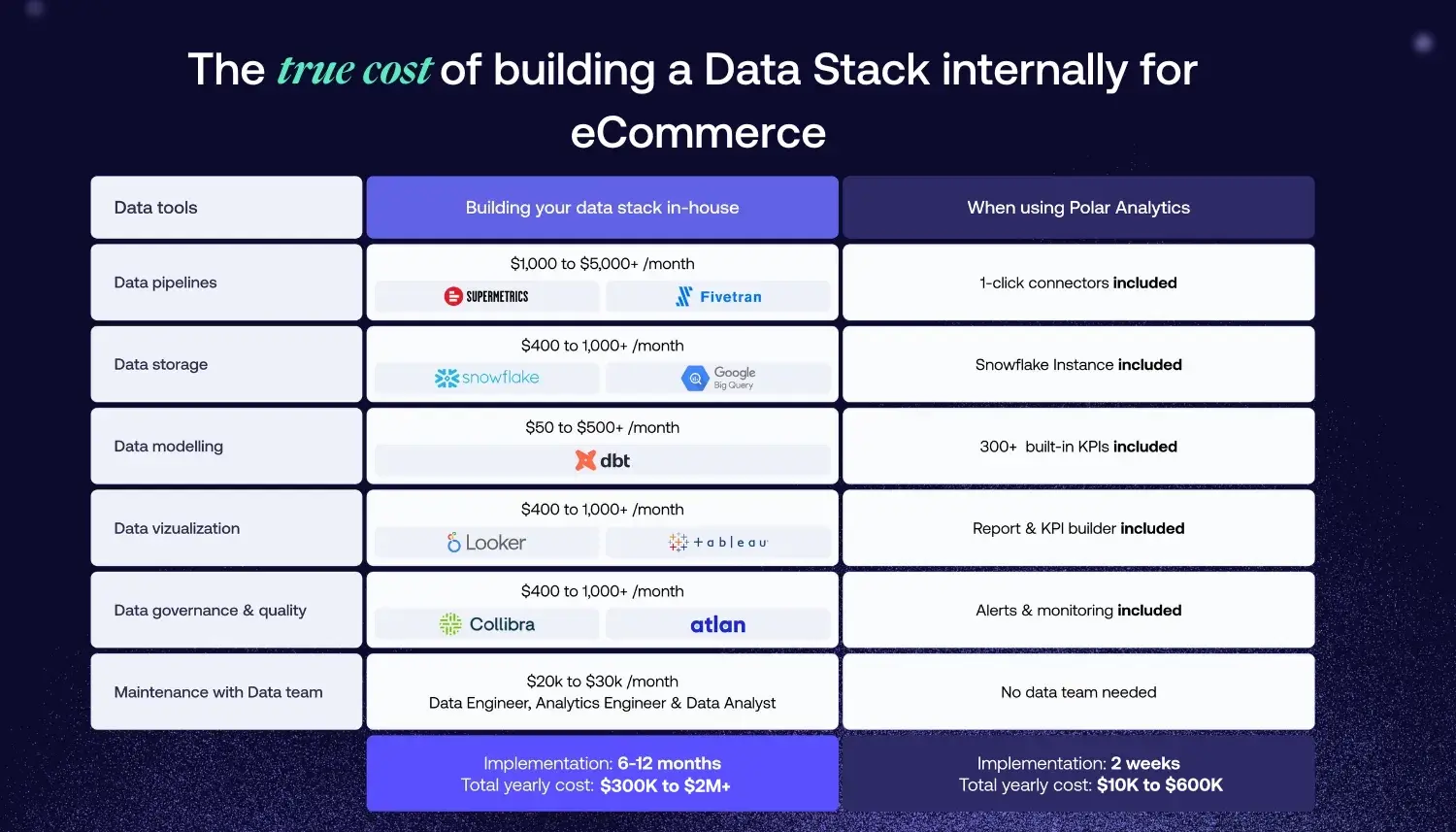

Understanding this difference is critical for ecommerce teams making data stack decisions. The wrong choice leads to vendor lock-in, data inconsistency across tools, and expensive migrations later.

What Is a Universal Semantic Layer?

A universal semantic layer is an independent layer that sits between your data warehouse and all the systems that consume data from it. It is not tied to any specific BI platform. It centralizes your business rules in one place and serves them to every consumer: dashboards, AI agents, spreadsheets, custom applications, and APIs.

The key characteristic is vendor independence. Your business logic and metric definitions live in a solution you own and control. You can connect new BI platforms, swap out old ones, and add AI integrations, all without rebuilding your metrics.

Think of it like a recipe book. The recipes contain your business rules: what "revenue" means, how to calculate ROAS, what constitutes a "new customer." Any chef in your kitchen (that is, any consuming application) can use the recipe book to produce consistent results. The recipes do not live inside one specific appliance.
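To make the recipe-book idea concrete, here is a minimal Python sketch of a metric registry. It assumes a hypothetical `orders` table with `order_total`, `refund_total`, and `is_test_order` columns; the `Metric` class and `compile_query` function are illustrative names, not any vendor's API. Each metric is written once, and every consumer asks the registry for SQL instead of writing its own.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Metric:
    """One business rule (a 'recipe'), defined once."""
    name: str
    expression: str  # SQL aggregate expression for the metric
    table: str
    filters: Tuple[str, ...] = ()


# Hypothetical registry: the single place where metric definitions live.
REGISTRY = {
    "revenue": Metric(
        name="revenue",
        expression="SUM(order_total - refund_total)",
        table="orders",
        filters=("is_test_order = FALSE",),
    ),
    "orders": Metric(
        name="orders",
        expression="COUNT(DISTINCT order_id)",
        table="orders",
        filters=("is_test_order = FALSE",),
    ),
}


def compile_query(metric_name: str, group_by: Optional[List[str]] = None) -> str:
    """Render the shared definition to SQL for whichever consumer asks."""
    metric = REGISTRY[metric_name]
    dims = list(group_by or [])
    select = ", ".join(dims + [f"{metric.expression} AS {metric.name}"])
    sql = f"SELECT {select} FROM {metric.table}"
    if metric.filters:
        sql += " WHERE " + " AND ".join(metric.filters)
    if dims:
        sql += " GROUP BY " + ", ".join(dims)
    return sql


# A dashboard and an AI agent asking for revenue by channel get identical SQL.
dashboard_sql = compile_query("revenue", ["channel"])
agent_sql = compile_query("revenue", ["channel"])
assert dashboard_sql == agent_sql
```

Platforms such as Cube or dbt's semantic layer express the same idea in their own modeling languages; this sketch only shows the shape of it.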

What Is a BI-Native Semantic Layer?

A BI-native semantic layer is a semantic model that lives inside a specific BI tool. That tool owns the business rules and metric logic. You define metrics using the tool's proprietary language (LookML in Looker, DAX in Power BI), store them in the tool's data model, and access them through the tool's interface.

This works well within that single system. The problem is what happens when you step outside it, and for most growing businesses, stepping outside is inevitable. The moment you add a second BI tool, an AI agent, or a custom application, you face a choice: define the metrics again in the new system, or accept inconsistent data across your organization.
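Here is a small, hypothetical example of what "accepting inconsistent data" looks like in practice: the same three orders, with revenue re-implemented in two places. The sample orders and both functions are invented for illustration; the point is only that two reasonable-looking definitions quietly diverge.

```python
# Hypothetical sample orders; amounts in dollars.
ORDERS = [
    {"order_id": 1, "total": 120.0, "refunded": 0.0, "is_test": False},
    {"order_id": 2, "total": 80.0, "refunded": 80.0, "is_test": False},
    {"order_id": 3, "total": 50.0, "refunded": 0.0, "is_test": True},
]


def revenue_in_bi_tool(orders):
    # Definition modeled inside the BI tool: net of refunds, test orders excluded.
    return sum(o["total"] - o["refunded"] for o in orders if not o["is_test"])


def revenue_in_ai_agent(orders):
    # Re-implementation for the AI agent: gross revenue, test orders never filtered.
    return sum(o["total"] for o in orders)


print(revenue_in_bi_tool(ORDERS))   # 120.0
print(revenue_in_ai_agent(ORDERS))  # 250.0
```

Neither function is obviously "wrong" on its own, which is why this kind of drift tends to surface only when two teams compare numbers.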

Head-to-Head Comparison

When BI-Native Wins

BI-native semantic layers are the right choice in specific scenarios, and they have real advantages that universal approaches cannot fully replicate.

Single-Tool Environments

If your organization uses exactly one BI tool and has no plans to change, BI-native makes sense. The semantic layer inside Looker, Power BI, or Tableau is deeply integrated with the platform's visualization and query engine. Business users get a smooth native experience with faster time to insight for straightforward use cases.

Simpler Query Path

A BI-native semantic layer queries data through a path optimized for that specific tool's engine. A universal semantic layer adds an intermediary that every query must pass through, which can introduce latency, add a point of failure, and create debugging complexity when numbers look wrong. Is the issue in the warehouse, the semantic layer, or the consuming tool? With a BI-native approach, there is one fewer layer to investigate. For teams with simple stacks and performance-sensitive workloads, this is a meaningful advantage.

Early-Stage Teams with Simple Needs

A team using Shopify analytics, Google Analytics, and one BI dashboard does not need a universal semantic layer yet. The overhead of a separate solution outweighs the benefits when you have two or three data sources and a small team.

Deep BI-Specific Workflows

Some organizations depend heavily on BI-specific features: complex row-level security in Looker, advanced DAX calculations in Power BI, or native visualization workflows in Tableau. Migrating these away from the BI tool's semantic layer can be expensive and impractical. Enhancing the existing model is often more pragmatic than replacing it.

When Universal Wins

The independent semantic layer wins in the scenarios that characterize most growing ecommerce brands.

Multi-Tool Data Stacks

The moment you use more than one BI tool, one AI interface, or one custom application, you have a multi-tool stack. A universal semantic layer serves consistent data to every consumer. A BI-native approach requires rebuilding definitions in each system and keeping them synchronized.

For ecommerce brands, multi-tool is the default. Shopify + GA4 + Meta + TikTok + Klaviyo + a BI tool + an AI chatbot = seven systems producing or consuming the same underlying data. A universal semantic layer is the architecture built to keep metric definitions consistent across all of them.

Growing Organizations with Multiple Personas

A five-person team might all use the same dashboard. A fifty-person organization has executives who need board-level metrics, marketers who need channel-level ROAS, ops teams who need fulfillment data, and finance who need P&L figures. Each department needs a different view of the same underlying data, but every view must use consistent definitions.

A universal semantic layer serves all these users through different tools without duplicating definitions. A BI-native approach requires either building every view inside one tool, or accepting that different departments see different numbers.

AI and Agentic Use Cases

AI tools and LLMs need programmatic access to governed data. They do not log into your Looker dashboard. They query APIs. A universal semantic layer provides that access natively. A BI-native approach requires building a custom API layer on top of the BI tool, which is a significant engineering investment.
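A rough sketch of what "programmatic access to governed data" can mean: an agent sends a JSON request naming a metric and dimensions, and the layer answers only with definitions it already governs. The `handle_agent_request` function, the metric expressions, and the allowed dimensions are all hypothetical; a real deployment would sit behind an HTTP endpoint and execute the SQL rather than return it.

```python
import json

# Hypothetical governed definitions the layer exposes to programmatic callers.
METRICS = {
    "revenue": "SUM(order_total - refund_total)",
    "aov": "SUM(order_total - refund_total) / COUNT(DISTINCT order_id)",
}
ALLOWED_DIMENSIONS = {"channel", "country", "order_date"}


def handle_agent_request(body: str) -> str:
    """Validate an agent's JSON request and return governed SQL as JSON.

    The agent never writes SQL itself; it can only ask for metrics and
    dimensions the layer already defines.
    """
    request = json.loads(body)
    metric = request.get("metric")
    dims = request.get("dimensions", [])
    if metric not in METRICS:
        return json.dumps({"error": f"unknown metric: {metric}"})
    bad = [d for d in dims if d not in ALLOWED_DIMENSIONS]
    if bad:
        return json.dumps({"error": f"unknown dimensions: {bad}"})
    select = ", ".join(dims + [f"{METRICS[metric]} AS {metric}"])
    sql = f"SELECT {select} FROM orders"
    if dims:
        sql += " GROUP BY " + ", ".join(dims)
    return json.dumps({"metric": metric, "sql": sql})


print(handle_agent_request('{"metric": "revenue", "dimensions": ["channel"]}'))
```

Restricting the agent to named metrics and whitelisted dimensions is what keeps its answers on the same definitions the dashboards use.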

Modern data architectures are moving toward AI-ready, API-first designs. Cube, dbt, and AtScale all support universal semantic layers precisely because agentic workflows need governed data access that does not depend on a human navigating a BI interface.

Ecommerce Brands with Channel Sprawl

Ecommerce brands running Shopify + Google Ads + Meta Ads + TikTok Ads + Klaviyo + Recharge + warehouse and fulfillment tools have more diverse data sources than any single BI tool's semantic model can consistently govern. A universal approach maps all these sources into a unified view where revenue, ROAS, and LTV are each defined once and served to every consumer.
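Defining ROAS, MER, AOV, and CAC once looks roughly like the sketch below. The formulas follow common conventions (ROAS as attributed revenue over channel spend, MER as total revenue over total ad spend), but teams often differ on details, such as whether CAC counts only ad spend, which is exactly why the choice should be pinned down in one place. The `ChannelStats` type and all figures are made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ChannelStats:
    channel: str
    ad_spend: float
    attributed_revenue: float
    new_customers: int


def roas(s: ChannelStats) -> float:
    """Return on ad spend: attributed revenue per dollar spent on the channel."""
    return s.attributed_revenue / s.ad_spend


def cac(s: ChannelStats) -> float:
    """Customer acquisition cost, here using the channel's ad spend only."""
    return s.ad_spend / s.new_customers


def mer(total_revenue: float, channels: List[ChannelStats]) -> float:
    """Marketing efficiency ratio: all revenue over all ad spend, no attribution."""
    return total_revenue / sum(s.ad_spend for s in channels)


def aov(total_revenue: float, order_count: int) -> float:
    """Average order value."""
    return total_revenue / order_count


# Made-up month of data for three channels.
channels = [
    ChannelStats("meta", ad_spend=2000.0, attributed_revenue=6000.0, new_customers=40),
    ChannelStats("google", ad_spend=1000.0, attributed_revenue=4000.0, new_customers=25),
    ChannelStats("tiktok", ad_spend=500.0, attributed_revenue=750.0, new_customers=10),
]
total_revenue = 14000.0  # includes organic and unattributed sales

for s in channels:
    print(s.channel, roas(s), cac(s))
print("MER", mer(total_revenue, channels))  # MER 4.0
print("AOV", aov(total_revenue, 200))       # AOV 70.0
```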

The Tradeoffs of Universal Semantic Layers

Universal semantic layers are not without cost. The added intermediary layer introduces real tradeoffs that data teams should evaluate honestly.

Query latency. Every query passes through an additional layer between the warehouse and the consuming application. Mature platforms mitigate this with pre-aggregation and caching, but the intermediary is still there. For sub-second dashboard interactions on simple queries, a BI-native path may be faster.
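Caching is one of the mitigations mentioned above. The sketch below is a minimal time-to-live result cache keyed on the compiled SQL, assuming a hypothetical `run_query` callable that hits the warehouse. It is not pre-aggregation (which materializes rollup tables ahead of time) and not any platform's actual caching layer.

```python
import time
from typing import Callable, Dict, List, Tuple


class QueryCache:
    """Minimal TTL result cache in front of a (hypothetical) warehouse."""

    def __init__(self, run_query: Callable[[str], List[tuple]], ttl_seconds: float = 300.0):
        self.run_query = run_query
        self.ttl = ttl_seconds
        self._entries: Dict[str, Tuple[float, List[tuple]]] = {}
        self.warehouse_calls = 0  # counts real round-trips, for visibility

    def get(self, sql: str) -> List[tuple]:
        now = time.monotonic()
        cached = self._entries.get(sql)
        if cached is not None and now - cached[0] < self.ttl:
            return cached[1]  # fresh hit: skip the warehouse entirely
        self.warehouse_calls += 1
        rows = self.run_query(sql)
        self._entries[sql] = (now, rows)
        return rows


def fake_warehouse(sql: str) -> List[tuple]:
    # Stand-in for a real warehouse client.
    return [("meta", 6000.0), ("google", 4000.0)]


cache = QueryCache(fake_warehouse, ttl_seconds=60.0)
sql = "SELECT channel, SUM(order_total - refund_total) AS revenue FROM orders GROUP BY channel"
first = cache.get(sql)
second = cache.get(sql)
print(cache.warehouse_calls)  # 1
```

The second identical request is answered from memory, so only the first pays the full warehouse round-trip. A shorter TTL keeps results fresher at the cost of more warehouse calls.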

Debugging complexity. When a number looks wrong, there are now three places to investigate: the warehouse, the semantic layer, and the consuming tool. With a BI-native approach, the surface area is smaller. Teams adopting a universal layer need clear observability into query translation and lineage.
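One lightweight form of that observability is to record, for every query, which consumer asked, which version of the metric definition was used, and the exact SQL sent to the warehouse. The `QueryTrace` record and `traced_query` wrapper below are hypothetical names in a minimal sketch.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class QueryTrace:
    consumer: str         # which tool asked: dashboard, AI agent, spreadsheet...
    metric: str
    definition_hash: str  # short fingerprint of the metric definition used
    sql: str              # exactly what was sent to the warehouse


TRACES: List[QueryTrace] = []


def traced_query(consumer: str, metric: str, definition: str, sql: str,
                 run: Callable[[str], list]) -> list:
    """Record what the layer sent, then run the query."""
    digest = hashlib.sha256(definition.encode("utf-8")).hexdigest()[:12]
    TRACES.append(QueryTrace(consumer, metric, digest, sql))
    return run(sql)


def fake_warehouse(sql: str) -> list:
    # Stand-in for a real warehouse client.
    return [(14000.0,)]


revenue_def = "SUM(order_total - refund_total)"
revenue_sql = f"SELECT {revenue_def} AS revenue FROM orders"
traced_query("dashboard", "revenue", revenue_def, revenue_sql, fake_warehouse)
traced_query("ai_agent", "revenue", revenue_def, revenue_sql, fake_warehouse)

# Same fingerprint and SQL for both consumers: the layer served one definition.
a, b = TRACES
assert (a.definition_hash, a.sql) == (b.definition_hash, b.sql)
```

If a dashboard and an AI agent still report different revenue, matching traces point the investigation toward the consuming tool; differing traces point toward the semantic layer or its inputs.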

Upfront investment. Managed platforms reduce setup time significantly, but custom builds on dbt or Cube require weeks to months of engineering work, comparable to or greater than the effort of setting up a BI-native model. The payoff comes over time as the number of consuming tools grows, but the initial investment is real.

These tradeoffs are manageable for most multi-tool environments, but they are worth understanding before committing to a migration.

Data Governance: Where the Gap Is Most Visible

With a BI-native approach, governance lives in the tool. Access controls, privacy policies, and security rules must be defined separately in each platform. When your business uses Looker, Power BI, and a custom application, you have three sets of governance policies to maintain.

A universal semantic layer centralizes governance. You define who can access what data once, at the semantic layer. Every system that queries the layer inherits those controls automatically. For ecommerce businesses managing customer data across Shopify, Meta, Google, and email platforms, centralized governance is essential for compliance and data quality.
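A minimal sketch of "define it once, inherit it everywhere": access rules live in one policy table, and every query is checked against the caller's role before it reaches the warehouse. The roles, column names, `Policy` class, and `governed_sql` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Policy:
    # Columns this role may never select (e.g. customer PII).
    denied_columns: Set[str] = field(default_factory=set)
    # Optional SQL predicate appended to every query for this role.
    row_filter: Optional[str] = None


# Hypothetical roles and rules, defined once at the semantic layer.
POLICIES: Dict[str, Policy] = {
    "finance": Policy(),
    "marketing": Policy(denied_columns={"customer_email", "customer_phone"}),
    "eu_agency": Policy(
        denied_columns={"customer_email", "customer_phone"},
        row_filter="region = 'EU'",
    ),
}


def governed_sql(role: str, columns: List[str], table: str) -> str:
    """Apply the role's policy before any consumer's query reaches the warehouse."""
    policy = POLICIES[role]
    blocked = [c for c in columns if c in policy.denied_columns]
    if blocked:
        raise PermissionError(f"{role} may not query {blocked}")
    sql = f"SELECT {', '.join(columns)} FROM {table}"
    if policy.row_filter:
        sql += f" WHERE {policy.row_filter}"
    return sql


print(governed_sql("eu_agency", ["order_id", "revenue"], "orders"))
```

Because a dashboard, a spreadsheet, and an AI agent all go through `governed_sql`, changing a rule in `POLICIES` changes it for every consumer at once.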

The Ecommerce Decision Matrix

Choose BI-native if:

- You use exactly one BI tool and will for the foreseeable future

- Your team is under five people with simple analytics needs

- Your data sources are limited to two or three systems

- Query performance on simple dashboards is your top priority

Choose universal if:

- You use multiple BI tools, AI interfaces, or custom applications

- You have more than three data sources

- You run paid ads across multiple platforms

- Your organization has multiple teams with different analytics needs

- You are implementing or planning AI analytics

- You need consistent data across teams, tools, or external partners
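The checklist above can be encoded as a small function, shown here as a sketch. It reads the matrix one particular way, treating any single "choose universal" condition as decisive and defaulting to BI-native otherwise; the `Stack` fields are hypothetical inputs, and a real decision will weigh these factors rather than simply count them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stack:
    bi_tools: int
    ai_or_custom_apps: int
    data_sources: int
    ad_platforms: int
    teams_with_distinct_needs: int
    planning_ai_analytics: bool
    needs_cross_tool_consistency: bool


def recommend(stack: Stack) -> str:
    """Return 'universal' if any universal signal is present, else 'bi-native'."""
    universal_signals = [
        stack.bi_tools > 1 or stack.ai_or_custom_apps > 0,  # multiple consumers
        stack.data_sources > 3,
        stack.ad_platforms > 1,
        stack.teams_with_distinct_needs > 1,
        stack.planning_ai_analytics,
        stack.needs_cross_tool_consistency,
    ]
    return "universal" if any(universal_signals) else "bi-native"


early_stage = Stack(bi_tools=1, ai_or_custom_apps=0, data_sources=3, ad_platforms=1,
                    teams_with_distinct_needs=1, planning_ai_analytics=False,
                    needs_cross_tool_consistency=False)
print(recommend(early_stage))  # bi-native
```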

How Polar Analytics Approaches the Semantic Layer

Polar Analytics is a managed universal semantic layer built for ecommerce, independent of any BI platform. It connects 45+ native data sources (Shopify, Meta, Google, TikTok, Klaviyo, Recharge) and pre-loads ecommerce metric definitions: ROAS, LTV, CAC, AOV, contribution margin, MER, repeat purchase rate. Every metric is defined once; every dashboard, report, and AI query pulls from the same source of truth.

A first-party server-side pixel and Shapley-based attribution model recover the iOS/Safari signal stripped from Meta and Google. Ask Polar answers plain-English questions using governed definitions instead of ad-hoc SQL. Setup takes hours, not quarters.
